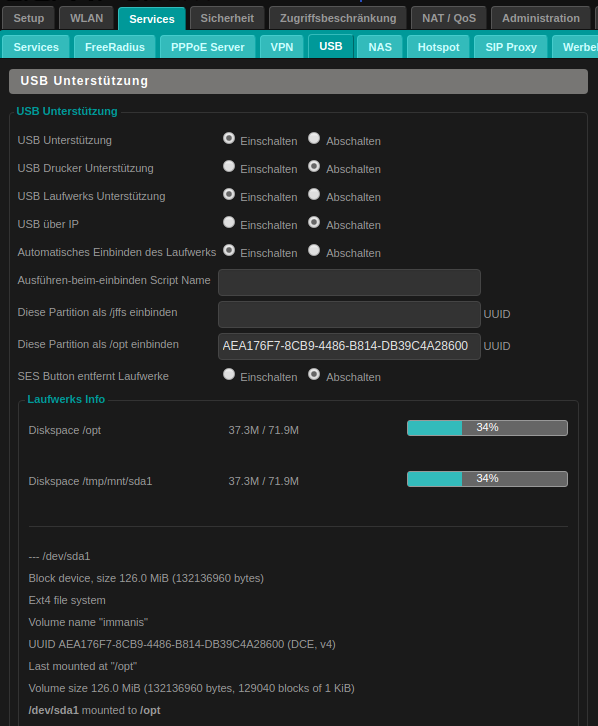

The following refers not so much to a bug per se (wget works as intended) but to an interesting phenomenon we've been discussing for a while now (see: https://itsfoss.community/t/minutely-different-results-when-downloading-pdf-via-wget-and-browser/5128).

We've compared downloads of a PDF (example: the e-book "Linux from Scratch" from distrowatch, source: https://….com/free/w_linu01/prgm.cgi?a=1) made with the browser (chromium) and with wget, and found that the PDF obtained via wget is exactly 16 bytes larger than the one we got via the browser:

`wget_Linux_from_Scratch.pdf`: 959330 bytes, MD5 `da65d66d0dfd995d7fd4f7e7327506b3`

`browser_Linux_from_Scratch.pdf`: 959314 bytes

Using a hex viewer, we've also established how the two PDFs differ: the wget download is 16 bytes larger because of data appended to it.

We are very interested in why that is and what the technical background may be. System: Linux/Lubuntu 18.04.4 LTS, 64 bit.

Following your suggestion, I can now tell you the following:

1.) Detailed output of `wget --debug` can be found here: … The outputs refer to runs with the user agents of chromium, falkon and firefox.

2.) `6ec4ff88e8884c61587e124af2e6181d browser_Linux_from_Scratch.pdf` (from within chromium)

3.) `6ec4ff88e8884c61587e124af2e6181d wget_user-agent_chromium_Linux_from_Scratch.pdf`
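The hex-viewer comparison can also be scripted. The Python sketch below is not the procedure used in the thread; it reports the sizes, MD5 digests (the 32-digit hex strings `md5sum` prints) and the first differing offset of two downloads, and returns the trailing bytes when the larger file is the smaller one plus an append. The function names and sample bytes are hypothetical; the real 16 appended bytes are not known here. Matching digests, as for the browser download and the wget run with chromium's user agent, mean the files are byte-for-byte identical for all practical purposes.

```python
import hashlib


def first_difference(a: bytes, b: bytes):
    """Return the offset of the first differing byte, or None if a == b."""
    for i, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return i
    # The common prefix matches: either identical, or one is a prefix of the other.
    return None if len(a) == len(b) else min(len(a), len(b))


def compare_downloads(browser_bytes: bytes, wget_bytes: bytes) -> dict:
    """Summarise how two downloads of the same URL differ (hypothetical helper)."""
    offset = first_difference(browser_bytes, wget_bytes)
    appended = None
    # An append means the wget copy is the complete browser copy plus a tail.
    if offset == len(browser_bytes) and len(wget_bytes) > len(browser_bytes):
        appended = wget_bytes[offset:]
    return {
        "browser_size": len(browser_bytes),
        "wget_size": len(wget_bytes),
        # Same hex digest that `md5sum` prints for the file.
        "browser_md5": hashlib.md5(browser_bytes).hexdigest(),
        "wget_md5": hashlib.md5(wget_bytes).hexdigest(),
        "first_difference": offset,
        "appended": appended,
    }


if __name__ == "__main__":
    # Hypothetical stand-in data. For real files use e.g.
    # open("wget_Linux_from_Scratch.pdf", "rb").read().
    browser = b"%PDF-1.4\n% ...document body...\n%%EOF\n"
    wget = browser + b"0123456789abcdef"  # 16 invented trailing bytes
    report = compare_downloads(browser, wget)
    print(report["wget_size"] - report["browser_size"])  # 16
    print(report["appended"])  # b'0123456789abcdef'
```

Printing `report["appended"]` for the real files would show the same bytes a hex viewer displays at the end of the larger file.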

I try to download a file with wget and curl, and it is rejected with a 403 error (forbidden). I can view the file using the web browser on the same machine. I try again with my browser's user agent, obtained by …

What other reasons might there be for the 403, and in what ways can I alter the wget and curl commands to overcome them? (This is not about being able to get the file; I know I can just save it from my browser. It's about understanding why the command-line tools work differently.)

Thanks to all the excellent answers given to this question. The specific problem I had encountered was that the server was checking the referrer. By adding the referrer to the command line, I could get the file using curl and wget.

The server that checked the referrer bounced through a 302 to another location that performed no checks at all, so a curl or wget of that site worked cleanly.

If anyone is interested, this came about because I was reading this page to learn about embedded CSS and was trying to look at the site's CSS for an example. The actual URL I was having trouble with was this, and the curl command I ended up with is `curl -L -H 'Referer: …' …`.

An HTTP request may contain more headers that are not set by curl or wget:

- Cookie: this is the most likely reason why a request would be rejected; I have seen this happen on download sites. Given a cookie `key=val`, you can set it with the `-b key=val` (or `--cookie key=val`) option for curl.
- Referer (sic): when clicking a link on a web page, most browsers tend to send the current page as referrer. This header should not be relied on, but even eBay failed to reset a password when it was absent. The curl option for this is `-e URL` (or `--referer URL`).
- Authorization: this is becoming less popular now due to the uncontrollable UI of the username/password dialog, but it is still possible. It can be set in curl with the `-u user:password` (or `--user user:password`) option.
- User-Agent: some requests will yield different responses depending on the User Agent. This can be used in a good way (providing the real download rather than a list of mirrors) or in a bad way (rejecting user agents which do not start with `Mozilla`, or which contain `Wget` or `curl`).

You can normally use the developer tools of your browser (Firefox and Chrome support this) to read the headers sent by your browser. If the connection is not encrypted (that is, not using HTTPS), then you can also use a packet sniffer such as Wireshark for this purpose.

Besides these headers, websites may also trigger some actions behind the scenes that change state. For example, when opening a page, a request may be performed in the background to prepare the download link. These actions typically make use of JavaScript, but there may also be a hidden frame to facilitate them.

If you are looking for a method to easily fetch files from a download site, have a look at plowdown, included with plowshare.
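To see how a single missing header can produce the 403 from the question, the Python sketch below (standard library only) runs a local toy server. Everything about it is a hypothetical stand-in, not the site from the question: `/download` answers 403 unless the request carries the made-up `EXPECTED_REFERER`, and otherwise bounces through a 302 to `/file`, which performs no checks, mirroring the setup described above. `browser_like_headers` is a hypothetical helper that adds the headers curl's `-b`, `-e` and `-u` options (and a copied browser User-Agent) would send; for `-u` it assumes HTTP Basic authentication.

```python
import base64
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical value: the page a browser would report as Referer after a click.
EXPECTED_REFERER = "http://example.invalid/page-with-link.html"
BODY = b"file contents\n"


def browser_like_headers(cookie=None, referer=None, user=None, password="",
                         user_agent=None):
    """Build the headers the curl options discussed above would add (hypothetical helper)."""
    headers = {}
    if cookie:  # curl -b key=val
        headers["Cookie"] = cookie
    if referer:  # curl -e URL / --referer URL
        headers["Referer"] = referer
    if user is not None:  # curl -u user:password, assuming HTTP Basic auth
        token = base64.b64encode(f"{user}:{password}".encode()).decode("ascii")
        headers["Authorization"] = "Basic " + token
    if user_agent:  # the browser's User-Agent string
        headers["User-Agent"] = user_agent
    return headers


class DemoHandler(BaseHTTPRequestHandler):
    """Toy server: /download checks the Referer, then 302s to an unchecked /file."""

    def do_GET(self):
        if self.path == "/download":
            if self.headers.get("Referer") != EXPECTED_REFERER:
                self.send_error(403)  # what a bare wget/curl request gets here
                return
            self.send_response(302)
            self.send_header("Location", "/file")
            self.send_header("Content-Length", "0")
            self.end_headers()
        elif self.path == "/file":  # performs no checks at all
            self.send_response(200)
            self.send_header("Content-Type", "text/plain")
            self.send_header("Content-Length", str(len(BODY)))
            self.end_headers()
            self.wfile.write(BODY)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # silence per-request logging


def fetch(url, headers=None):
    """GET url with extra headers; return (status, body). Redirects are followed."""
    # ProxyHandler({}) keeps requests to 127.0.0.1 away from any configured proxy.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    request = urllib.request.Request(url, headers=headers or {})
    try:
        with opener.open(request, timeout=5) as response:
            return response.status, response.read()
    except urllib.error.HTTPError as err:
        return err.code, b""


def run_demo():
    server = HTTPServer(("127.0.0.1", 0), DemoHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        url = f"http://127.0.0.1:{server.server_port}/download"
        bare = fetch(url)  # no Referer, like plain wget/curl
        with_referer = fetch(url, browser_like_headers(referer=EXPECTED_REFERER))
        return bare, with_referer
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(run_demo())  # ((403, b''), (200, b'file contents\n'))
```

`fetch` follows the 302 automatically, which plays the role of curl's `-L`. Running the file prints a 403 for the bare request and a 200 once the Referer is added, even though the final `/file` location never looks at the header.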
